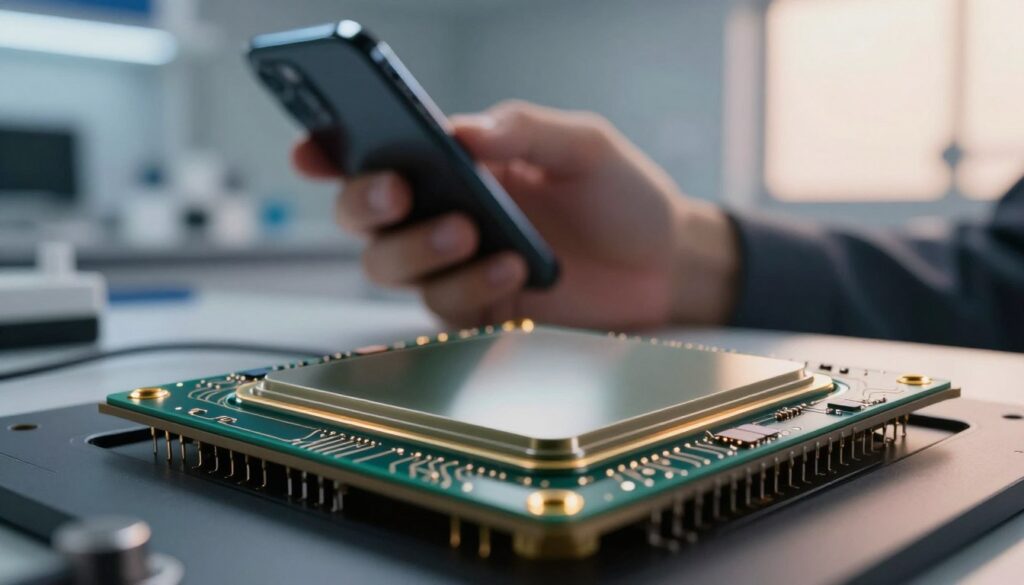

I often get asked why modern cameras capture better low-light images than older designs. I explain this from my own experience with phone and compact cameras. The shift to a back-illuminated sensor design changed how light reaches the photosites and made a real difference in everyday photography.

OmniVision was an early adopter, launching the OV8810 in 2008, and Sony followed in 2009 with a 5-megapixel, 1.75 μm BI CMOS sensor at consumer prices. I remember when Apple used OmniVision's OV5650 in the iPhone 4 to boost low-light image quality.

In short, this technology moved the wiring away from incoming light, so cameras could capture cleaner images with less noise. That practical change explains the key difference between older front-lit chips and newer designs.

Key Takeaways

- The back-illuminated sensor layout improved light collection for small cameras.

- OmniVision’s OV8810 (2008) helped drive early adoption of the design.

- Sony’s 2009 5MP 1.75 μm BI CMOS brought the tech to consumers.

- Apple used OV5650 in the iPhone 4 to enhance low-light photography.

- The core difference is how wiring and photosites are arranged to favor light capture.

Understanding the Basics of Sensor Technology

At the heart of every picture is a tiny device that converts photons into charge. I focus on two core ideas: how photodiodes work and why the fill factor matters for image quality.

The Role of Photodiodes

Every digital camera relies on photodiodes to turn incoming light into electrical charge. I often note that the physical layout of these pixels affects sensitivity and noise over time.

In CCD and CMOS designs, circuitry and transistors compete with the light-sensitive surface for space on each pixel. That trade-off shapes the practical range of performance for different cameras.

Defining the Fill Factor

Fill factor is the ratio of the light-sensitive area of a pixel to its total area. In older interline-transfer CCDs this was about 30%, so much of the incoming light missed the photodiode.
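Here is a minimal sketch of that ratio in Python. The function name and the 5 μm pixel with 7.5 μm² of exposed photodiode are my own illustrative assumptions, chosen only to reproduce the roughly 30% figure; they do not describe any specific chip.

```python
def fill_factor(photosensitive_area_um2: float, pixel_pitch_um: float) -> float:
    """Return the fraction of a square pixel's area that is light-sensitive."""
    total_area_um2 = pixel_pitch_um ** 2
    if not 0 <= photosensitive_area_um2 <= total_area_um2:
        raise ValueError("photosensitive area must fit inside the pixel")
    return photosensitive_area_um2 / total_area_um2


# Hypothetical pixel: 5 um pitch (25 um^2 total), 7.5 um^2 of exposed photodiode.
example_fill_factor = fill_factor(7.5, 5.0)
print(f"Fill factor: {example_fill_factor:.0%}")
```

The remaining area in this example stands in for the transistors, wiring, and shielded registers that sit on the same surface as the photodiode.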

Manufacturers improved efficiency by adding microlenses and, later, by changing which side of the wafer faces the light. These changes help focus light onto the photodiodes and raise effective sensitivity without enlarging the pixels.

| Metric | Legacy CCD | Modern CMOS |
|---|---|---|
| Typical fill factor | ~30% | 40–80% (with microlenses) |
| Circuitry impact | Shielded registers reduce light | Front-side wiring limits surface area |
| Common fixes | Larger pixels, optics | Microlenses, stacked designs |

How a Back-Illuminated Sensor Improves Light Capture

Flipping the wafer during fabrication changed how much light actually reaches each pixel.

By moving the wiring behind the photosensitive layer, the chip raises the chance a photon is recorded. I’ve seen quantum efficiency jump from roughly 60% to over 90% in real devices.

This change boosts sensitivity for tiny pixels in compact cameras and phone cameras. More photons hit the active area, so the image has less noise and better dynamic range.
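To put rough numbers on that, here is a small sketch using the 60% and 90% quantum efficiency figures. It assumes photon shot noise is the only noise source (so SNR is the square root of the detected photon count) and uses a made-up count of 1,000 incident photons per pixel; real sensors also have read noise and dark current, so treat this as an upper-level illustration rather than a measurement.

```python
import math


def detected_photons(incident_photons: float, quantum_efficiency: float) -> float:
    """Photons converted to charge, given a quantum efficiency between 0 and 1."""
    return incident_photons * quantum_efficiency


def shot_noise_snr(detected: float) -> float:
    """Signal-to-noise ratio when only photon shot noise is present."""
    return math.sqrt(detected)


incident = 1000  # hypothetical photons reaching one pixel during the exposure
front_side = detected_photons(incident, 0.60)
back_side = detected_photons(incident, 0.90)

signal_gain = back_side / front_side
snr_gain = shot_noise_snr(back_side) / shot_noise_snr(front_side)

print(f"Signal gain: {signal_gain:.2f}x")
print(f"Shot-noise SNR gain: {snr_gain:.2f}x")
```

Under these assumptions the signal rises by half, while the shot-noise-limited SNR rises by the square root of that factor.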

The architecture performs especially well where front-side wiring once blocked incoming light. Unlike front-illuminated layouts, this approach sends more photons to the conversion site without enlarging the die.

“When I test modern sensors, increased light capture is the clearest upgrade to image quality.”

- Higher quantum efficiency improves low-light performance.

- Small pixels no longer suffer as much from wiring shadows.

- Manufacturers gain better performance and efficiency without bigger optics.

Comparing Performance and Manufacturing Challenges

As pixels shrank, engineers faced fresh challenges in keeping noise under control. I saw how trade-offs between speed, heat, and charge leakage became central to design choices.

Managing dark current and random noise required new approaches in wafer processing and circuitry layout. Sony's early work on Exmor R helped here, with Sony citing a gain of +8 dB in signal and a 2 dB reduction in noise.
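Decibel figures like +8 dB and -2 dB are easier to picture as linear ratios. The sketch below converts them, assuming the amplitude convention (20·log10), which is common for sensor signal and noise levels. I can't confirm from the figures alone which convention Sony used, so read the output as illustrative.

```python
def db_to_amplitude_ratio(db: float) -> float:
    """Convert decibels to a linear ratio, assuming the 20*log10 amplitude convention."""
    return 10 ** (db / 20)


signal_ratio = db_to_amplitude_ratio(8.0)    # signal level relative to baseline
noise_ratio = db_to_amplitude_ratio(-2.0)    # noise level relative to baseline
snr_ratio = signal_ratio / noise_ratio       # combined effect, equal to +10 dB

print(f"Signal: {signal_ratio:.2f}x")
print(f"Noise: {noise_ratio:.2f}x")
print(f"SNR: {snr_ratio:.2f}x")
```

Because decibels add, the two figures combine to a 10 dB improvement in signal-to-noise ratio under this convention.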

Managing Noise and Dark Current

I found that the thinned, exposed back surface can introduce crosstalk between neighboring pixels and raise dark current. Manufacturers refined materials and thermal control to keep noise low.

Good thermal design and shielding lowered unwanted charge and preserved image quality during long exposures.

The Impact of Microlenses

Microlenses guide light into each pixel, especially when rays arrive at an angle. This preserves sharpness and raises efficiency for small pixels.

Without proper microlens design, pixels lose photons and images get softer at the edges. Keeping lenses aligned with the pixel grid matters more as resolution climbs.

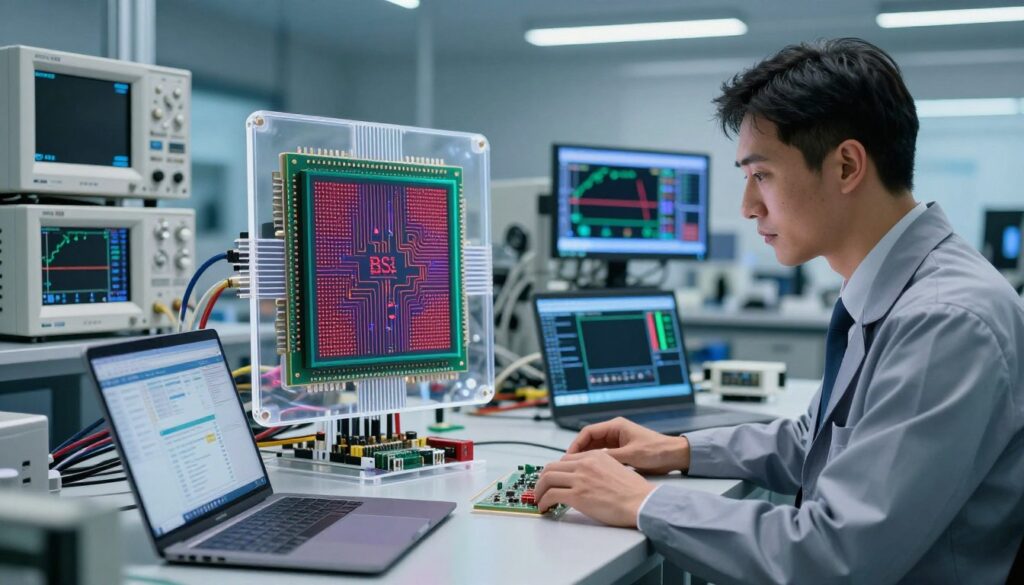

Advancements in Stacked CMOS

Stacked CMOS designs moved complex circuitry beneath the pixel layer. Sony commercialized Exmor RS in 2012, and that set the pace for higher readout speeds.

More recently, high-end cameras like the Nikon D850 (45.7 MP) and the Fujifilm X-T3 (26.1 MP) brought back-illuminated chips to larger formats, and Canon's EOS R3 shows how a stacked back-illuminated chip boosts performance in a real product.

| Challenge | Traditional CCD/Front-side | Stacked CMOS / Modern |
|---|---|---|
| Noise control | Lower circuitry noise but limited speed | Higher speed, requires advanced shielding |
| Readout speed | Modest rates | Very fast with on-die processing |
| Manufacturing yield | Proven but larger pixels | More complex, lower initial yields |
| Light efficiency | Improved with microlenses | High efficiency, but needs precise fabrication |

In my experience, the gains in sensitivity and performance outweigh the extra manufacturing time. Still, manufacturers must balance cost, yield, and final image quality to deliver reliable cameras to users.

Final Thoughts on Choosing the Right Camera Sensor

When I choose a camera today, I focus on how its chip will perform in real shooting conditions.

Choosing the right sensor depends on your needs. For low-light work, a modern back-illuminated design often gives better light capture than older front-illuminated chips.

If you need fast readout and on-board processing, look for stacked CMOS sensors. They boost performance and help with high frame rates and complex image tasks.

I recommend weighing pixel size, microlens alignment, and lens choice before you buy. Manufacturers like Sony and Fujifilm still lead in resolution and dynamic range.

In the end, the difference in image quality should guide your camera choice more than specs alone.

FAQ

What is the main difference between Back-Illuminated (BSI) sensors and standard CMOS sensors?

I explain it simply: in a BSI sensor the metal wiring and circuitry sit behind the photodiodes, and light enters through the thinned back side of the wafer, so it reaches the photodiodes directly. That improves light collection and sensitivity compared with traditional front-side designs, where the wiring sits in front of the photodiodes and blocks some incoming light. The result is better low-light performance and less noise for the same pixel size.

How do photodiodes affect sensor performance?

Photodiodes convert photons from the lens into electrical charges. I look at their size and efficiency because larger, well-designed photodiodes gather more light and raise the signal-to-noise ratio. That directly impacts image quality, especially in low light and high dynamic range scenes.

What does "fill factor" mean and why is it important?

Fill factor refers to the portion of each pixel that is sensitive to light versus occupied by transistors and wiring. I care about a high fill factor because it means more active area to capture photons, improving brightness and reducing the need to boost ISO, which keeps noise lower in final images.

How does a BSI design improve light capture compared to standard layouts?

By placing light-sensitive elements closer to the incoming light path and relocating circuitry behind them, BSI increases the effective fill factor. I notice clearer details and cleaner shadows in pictures taken at higher ISO settings because photons have a more direct path to the photodiodes.

Does using a BSI sensor reduce image noise?

Yes, in many cases it does. Since the design captures more photons per pixel, I can use lower gain for the same exposure, which cuts electronic noise. That helps especially with the small-pixel sensors found in compact cameras and smartphones.

How do microlenses influence sensor efficiency?

Microlenses sit above each pixel to focus light onto the photodiode. I find they boost effective sensitivity by directing oblique rays into the active area. Combined with BSI architecture, microlenses can significantly improve low-light throughput and color consistency across the frame.

What manufacturing challenges do BSI sensors present?

BSI fabrication requires wafer thinning, precision alignment, and careful handling to avoid damage. I know manufacturers must control defects and yield to keep costs reasonable. These steps add complexity compared with conventional CMOS processes.

How does managing noise and dark current differ between sensor types?

Dark current comes from silicon defects and thermal effects. Manufacturers mitigate it with better pixel design, lower operating temperatures, and process improvements. BSI reduces some noise sources by improving photon collection, but manufacturers still address dark current and read noise through circuit tweaks and cooling in high-end cameras.

What are stacked CMOS sensors and why do they matter?

Stacked CMOS separates the pixel layer from the processing and memory layers. I appreciate that this lets designers add faster readout circuits and more on-chip features without enlarging pixels. When stacked designs incorporate BSI pixels, they deliver high speed, improved dynamic range, and smarter image processing.

Will switching to a BSI sensor always make my camera better?

Not always. I consider the whole system: lens quality, pixel size, image processor, and noise management. BSI gives a meaningful boost in sensitivity and low-light behavior, but optical elements and signal processing determine the final image more than sensor architecture alone.

How do lens choices interact with sensor performance?

Lens sharpness, aperture, and transmission dictate how many photons reach the sensor. I recommend pairing high-transmission lenses with modern sensors to maximize the benefits of improved quantum efficiency and lower noise. A great sensor can’t fully compensate for a poor lens.

Are there trade-offs in pixel size when choosing sensors?

Yes. Smaller pixels let you pack more resolution into a chip but gather fewer photons each, which can raise noise. I weigh resolution against per-pixel sensitivity. BSI and microlenses help small pixels perform better, but larger pixels still excel in pure light-gathering capability.
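A quick sketch of that trade-off: with everything else equal, the photons a pixel collects scale with its area, so halving the pitch cuts the light per pixel to a quarter. The 3.5 μm reference pitch is a hypothetical comparison point, and the SNR figure assumes shot noise only and identical quantum efficiency for both pixels.

```python
import math


def relative_photons(pixel_pitch_um: float, reference_pitch_um: float) -> float:
    """Photons per pixel relative to a reference pixel, assuming light scales with area."""
    return (pixel_pitch_um / reference_pitch_um) ** 2


# Compare a 1.75 um pixel against a hypothetical 3.5 um reference pixel.
light_ratio = relative_photons(1.75, 3.5)
snr_ratio_small_vs_large = math.sqrt(light_ratio)  # shot-noise-limited SNR ratio

print(f"Light per pixel: {light_ratio:.2f}x")
print(f"Shot-noise SNR: {snr_ratio_small_vs_large:.2f}x")
```

This is per-pixel behavior only. Comparing whole images at the same output size changes the picture, because more small pixels are averaged together.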

Which manufacturers lead in BSI and advanced CMOS technology?

Several major companies push sensor innovations, including Sony, Samsung, and OmniVision. I look at their roadmaps for stacked designs, improved quantum efficiency, and lower noise to gauge which cameras will benefit most from these technologies.

How does sensor technology affect dynamic range?

Dynamic range depends on the maximum charge a pixel can hold (full-well capacity) and the noise floor. I find that pixel design, conversion gain, and readout circuitry influence both. BSI helps by collecting more light, effectively improving usable signal and thus perceived dynamic range in many shooting conditions.
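That relationship is commonly written as the ratio of full-well capacity to the noise floor, expressed in decibels or stops. The sketch below treats read noise as the noise floor, which is a simplification, and the 10,000 e⁻ full well and 2.5 e⁻ read noise are hypothetical values I picked for illustration, not specifications of any sensor.

```python
import math


def dynamic_range_db(full_well_electrons: float, read_noise_electrons: float) -> float:
    """Dynamic range in dB, using the 20*log10 convention for a charge ratio."""
    return 20 * math.log10(full_well_electrons / read_noise_electrons)


def dynamic_range_stops(full_well_electrons: float, read_noise_electrons: float) -> float:
    """Dynamic range in photographic stops (powers of two)."""
    return math.log2(full_well_electrons / read_noise_electrons)


# Hypothetical pixel: 10,000 e- full-well capacity, 2.5 e- read noise.
dr_db = dynamic_range_db(10_000, 2.5)
dr_stops = dynamic_range_stops(10_000, 2.5)

print(f"Dynamic range: {dr_db:.1f} dB ({dr_stops:.1f} stops)")
```

Either raising the full-well capacity or lowering the noise floor widens the range, which is why both pixel design and readout circuitry matter.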

Can firmware or processing compensate for sensor limitations?

Processing can do a lot: noise reduction, HDR blending, and smart tone mapping all improve results from modest sensors. I rely on modern image processors to extract detail and reduce artifacts, but they can't create photons. Better optics and sensor efficiency always give processors more data to work with.

Ryan Mercer is a camera sensor specialist and imaging technology researcher with a deep focus on CMOS and next-generation sensor design. He translates complex technical concepts into clear, practical insights, helping readers understand how sensor performance impacts image quality, dynamic range, and low-light capabilities.